NVIDIA releases major leap forward in graphics rendering

Tue, 14th Aug 2018

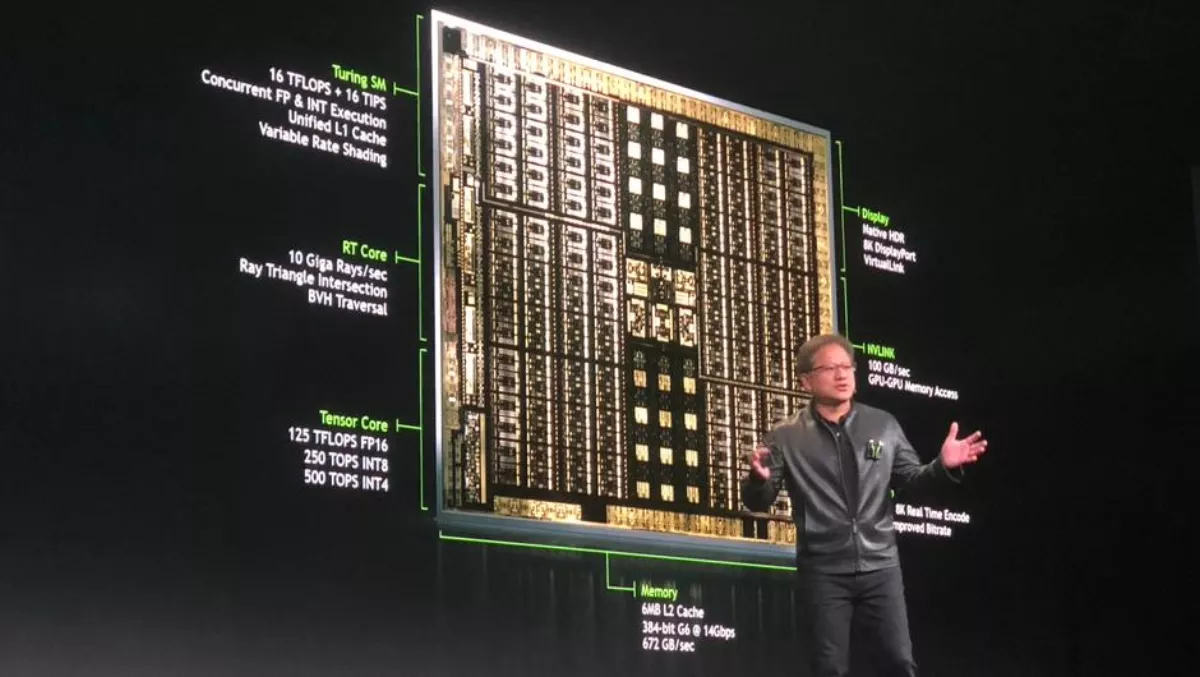

NVIDIA has unveiled the Turing GPU architecture, which promises a fundamental shift in computer graphics by fusing real-time ray tracing, AI, simulation and rasterisation.

Turing features new RT Cores to accelerate ray tracing and new Tensor Cores for AI inferencing, which together, for the first time, make real-time ray tracing possible.

These two engines, along with more powerful compute for simulation and enhanced rasterisation, usher in a new generation of hybrid rendering aimed at the $250 billion visual effects industry.

The company also unveiled its initial Turing-based products: the Quadro RTX 8000, Quadro RTX 6000 and Quadro RTX 5000 GPUs.

"Turing is NVIDIA's most important innovation in computer graphics in more than a decade," says NVIDIA CEO and founder Jensen Huang, speaking at SIGGRAPH 2018 in Vancouver today.

"Hybrid rendering will change the industry, opening up amazing possibilities that enhance our lives with more beautiful designs, richer entertainment and more interactive experiences. The arrival of real-time ray tracing is the Holy Grail of our industry."

Turing, NVIDIA's eighth-generation GPU architecture, is the world's first ray-tracing GPU and the result of more than 10,000 engineering-years of effort.

By using Turing's hybrid rendering capabilities, applications can simulate the physical world at 6x the speed of the previous Pascal generation.

To help developers take full advantage of these capabilities, NVIDIA has enhanced its RTX development platform with new AI, ray-tracing and simulation SDKs.

"This is a significant moment in the history of computer graphics," says Jon Peddie, CEO of analyst firm Jon Peddie Research (JPR).

"NVIDIA is delivering real-time ray tracing five years before we had thought possible."

The Turing architecture includes dedicated ray-tracing processors called RT Cores, which accelerate the computation of how light and sound travel in 3D environments at up to 10 GigaRays a second.
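To put 10 GigaRays a second in perspective, the sketch below divides that peak figure by the number of pixels drawn per second. The 1920x1080 resolution and 60 frames per second are illustrative assumptions, not figures from the announcement, and real scenes rarely sustain a quoted peak rate, so the result is only a rough upper bound.

```python
# Rough per-pixel ray budget implied by a peak ray-tracing rate.
# Assumptions (illustrative only): 1920x1080 output at 60 frames per second,
# and that the quoted peak of 10 GigaRays/s is fully achievable.

PEAK_RAYS_PER_SECOND = 10e9  # "up to 10 GigaRays a second"


def rays_per_pixel(rays_per_second: float, width: int, height: int, fps: float) -> float:
    """Return how many rays each pixel could receive per frame at the given rate."""
    pixels_per_second = width * height * fps
    return rays_per_second / pixels_per_second


if __name__ == "__main__":
    budget = rays_per_pixel(PEAK_RAYS_PER_SECOND, 1920, 1080, 60)
    print(f"~{budget:.0f} rays per pixel per frame")
```

Under these assumptions the peak rate works out to roughly 80 rays per pixel per frame, which would have to cover primary rays, shadows, reflections and any denoising inputs.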

Turing accelerates real-time ray tracing by up to 25x over the previous Pascal generation, and GPU nodes can be used for final-frame rendering for film effects at more than 30x the speed of CPU nodes.

The Turing architecture also features Tensor Cores, processors that accelerate deep learning training and inferencing, providing up to 500 trillion tensor operations a second.

This level of performance powers AI-enhanced features that give applications powerful new capabilities.

These include deep learning anti-aliasing (DLAA), described as a breakthrough in high-quality motion image generation, as well as denoising, resolution scaling and video re-timing.

These features are part of the NVIDIA NGX software development kit, a new deep learning-powered technology stack that enables developers to easily integrate accelerated, enhanced graphics, photo imaging and video processing into applications with pre-trained networks.

Turing-based GPUs feature a new streaming multiprocessor (SM) architecture that adds an integer execution unit running in parallel with the floating-point datapath, and a new unified cache architecture with double the bandwidth of the previous generation.

Combined with new graphics technologies such as variable rate shading, the Turing SM achieves unprecedented levels of performance per core.

With up to 4,608 CUDA cores, Turing can execute up to 16 trillion floating-point operations per second in parallel with up to 16 trillion integer operations per second.
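Peak GPU throughput figures are commonly quoted as cores x operations per core per clock x clock rate, with a fused multiply-add (FMA) counted as two floating-point operations. Assuming that convention applies here (the announcement does not say so), the sketch below works backwards from the quoted core count and floating-point rate to the clock rate they imply. The function name and constants are illustrative, not part of any NVIDIA tool.

```python
# Back-of-the-envelope: what clock rate does a peak FP32 figure imply?
# Assumption: the peak counts each fused multiply-add (FMA) as two
# floating-point operations, so peak = cores * 2 * clock.

CUDA_CORES = 4_608          # "up to 4,608 CUDA cores"
PEAK_FLOPS = 16e12          # "up to 16 trillion floating point operations" per second
OPS_PER_CORE_PER_CLOCK = 2  # assumed: one FMA per core per clock = 2 FLOPs


def implied_clock_hz(peak_flops: float, cores: int, ops_per_clock: int) -> float:
    """Solve peak = cores * ops_per_clock * clock for the clock rate in Hz."""
    return peak_flops / (cores * ops_per_clock)


if __name__ == "__main__":
    clock = implied_clock_hz(PEAK_FLOPS, CUDA_CORES, OPS_PER_CORE_PER_CLOCK)
    print(f"implied clock: {clock / 1e9:.2f} GHz")
```

Under these assumptions the quoted figures imply a clock of roughly 1.74 GHz; treat that as a sanity check on the headline numbers rather than a published specification.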

Developers can take advantage of NVIDIA's CUDA 10, FleX and PhysX SDKs to create complex simulations, such as particles or fluid dynamics for scientific visualisation, virtual environments and special effects.